By Alex Teague, Principal Vulnerability Researcher: IoT & Operational Technology Security

There is a peculiar irony at the heart of modern industrial and critical infrastructure security. Organisations that would never dream of deploying an enterprise server without patch management, endpoint detection, and network segmentation are routinely running programmable logic controllers, remote terminal units, and building management systems that were designed with security as an afterthought. As I start to assess some of the newer environments classified as critical national infrastructure (CNI), such as data centres, I notice more and more that the systems sitting at the intersection of the physical and digital worlds, controlling everything from water treatment and power distribution to HVAC systems and factory production lines, are in many cases profoundly and systematically insecure.

This is the landscape that occupies my working life. When I am not performing security architecture reviews of these environments, I work as a vulnerability researcher specialising in Internet of Things (IoT) and Operational Technology (OT) devices. I spend my days (and, if I am honest, a fair number of my nights) pulling apart embedded firmware, reverse-engineering proprietary protocols, and probing the attack surface of devices that most security professionals have never examined in detail. The findings are frequently alarming. But they are also, I believe, essential to moving the industry forward.

In this post, I want to explain what this research actually involves, walk through the principal vulnerability categories I encounter in this space, and make the case, in practical terms, for why this kind of work delivers genuine, measurable value to the organisations that engage with it.

Setting the Scene: What Makes IoT and OT Security Different?

Before diving into vulnerability categories, it is worth establishing why IoT and OT security is a distinct discipline rather than simply an extension of enterprise IT security.

Operational technology refers to the hardware and software that monitors and controls physical processes. This encompasses industrial control systems (ICS), SCADA (Supervisory Control and Data Acquisition) systems, distributed control systems (DCS), programmable logic controllers (PLCs), remote terminal units (RTUs), and a sprawling ecosystem of field devices, sensors, and human-machine interfaces (HMIs). The Internet of Things is a term that often conflates consumer devices such as smart speakers with industrial equipment; in a professional context it typically refers to networked embedded devices: IP cameras, smart meters, medical devices, building automation controllers, and the like.

What makes these environments categorically different from enterprise IT is a combination of factors that compound one another to create unique risk:

Longevity. An enterprise server might have a useful life of five to seven years. An industrial PLC or RTU might be expected to operate for twenty to thirty years, often well beyond the vendor’s support lifecycle. Vulnerabilities baked into a device’s design in 2005 may still be exploitable in 2035.

Legacy protocols. Much of the communication in OT environments relies on protocols developed in the 1970s, 1980s, and 1990s, such as Modbus, DNP3, and PROFIBUS, which were designed for reliability and determinism rather than security. Many have no native authentication or encryption. It is very difficult to retrospectively add security to an inherently insecure protocol, as we have seen with protocols like CAN.

Convergence with IT networks. The once-prevailing assumption that OT networks were “air-gapped” from corporate IT and the internet has been comprehensively dismantled by the march of digital transformation, remote access requirements, and supply chain connectivity. Today, these networks are increasingly interconnected, and the attack paths that once seemed theoretical are now well-documented and actively exploited.

Safety implications. Unlike an enterprise breach, where the immediate consequences are typically data loss and financial damage, a compromise of OT systems can cause physical harm to equipment, to the environment, and to human life. This raises the stakes of every vulnerability considerably.

Patch reticence. Even where patches are available, the operational constraints of OT environments (the reluctance to take a production line or substation offline, the fear of introducing instability) mean that patching rates are far lower than in IT environments. Vulnerabilities persist in production systems for years after they are disclosed.
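
To make the earlier point about legacy protocols concrete, here is a minimal sketch that builds a standard Modbus/TCP "Read Holding Registers" request. The function name and the example values are my own, chosen for illustration; the frame layout follows the public Modbus specification. The thing to notice is what is missing: no field in the frame identifies or authenticates the sender.

```python
import struct

def build_read_holding_registers(transaction_id: int, unit_id: int,
                                 start_address: int, quantity: int) -> bytes:
    """Build a Modbus/TCP Read Holding Registers (function 0x03) request."""
    # PDU: function code (1 byte), starting address (2), register count (2).
    pdu = struct.pack(">BHH", 0x03, start_address, quantity)
    # MBAP header: transaction id (2), protocol id (2, always 0),
    # length (2, counts the unit id plus the PDU), unit id (1).
    mbap = struct.pack(">HHHB", transaction_id, 0x0000, len(pdu) + 1, unit_id)
    return mbap + pdu

frame = build_read_holding_registers(transaction_id=1, unit_id=1,
                                     start_address=0, quantity=10)
print(frame.hex())  # 00010000000601030000000a
```

Every one of the twelve bytes is addressing or payload. Any host that can open a TCP connection to the device can send this frame, which is why network placement ends up carrying the whole security burden.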

It is also worth mentioning that in IT systems we are primarily concerned with protecting data, whereas in OT systems we are primarily concerned with protecting the process.

The Principal Vulnerability Categories

Drawing on my own research experience and the broader body of published work in this field, including OT:ICEFALL, the work of researchers at Claroty and Dragos, and countless individual disclosures, I find that the vulnerabilities I encounter tend to cluster into a set of recurring categories. Understanding these categories is essential both for researchers and for organisations seeking to understand their exposure.

1. Insecure Engineering Protocols

Engineering protocols are the mechanisms by which engineering workstations communicate with PLCs, RTUs, and other field devices for configuration, programming, firmware updates, and diagnostics.

Many proprietary engineering protocols, including those used by major vendors, implement no authentication whatsoever. A device on the same network segment as a PLC can, without any credentials, read and modify the control logic running on that device, force it into stop mode, or manipulate process values. In OT:ICEFALL, this was identified as the single most prevalent vulnerability class.

The underlying issue is one of assumed trust. These protocols were designed with the assumption that anyone who could physically reach the engineering workstation was authorised. In an era when engineering networks are connected to corporate WANs, accessible via remote desktop solutions, and reachable via lateral movement from a compromised IT network, that assumption is no longer tenable.

In my own research, I have regularly encountered proprietary engineering protocols that lack authentication by design and offer no practical means of introducing it. With no source validation, and no resistance to replayed commands, these protocols extend implicit trust to anyone with network access.

What I look for: Packet capture and analysis of engineering protocol traffic, unauthenticated command execution, replay attack feasibility, the absence of source IP validation.
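
One way to approach the replay question, sketched below under some loud assumptions, is to capture the same engineering command in two separate sessions and compare the frames byte for byte. If nothing varies, there is no session-specific field (nonce, counter, or timestamp) for the device to check, which suggests the frame may be accepted when replayed. The function name and the sample frames are hypothetical and not taken from any real protocol.

```python
def varying_offsets(capture_a: bytes, capture_b: bytes) -> list[int]:
    """Return byte offsets at which two captures of the same command differ.

    A length mismatch is reported as every offset past the shorter frame.
    """
    shared = min(len(capture_a), len(capture_b))
    diffs = [i for i in range(shared) if capture_a[i] != capture_b[i]]
    diffs.extend(range(shared, max(len(capture_a), len(capture_b))))
    return diffs

# Hypothetical "stop CPU" frames captured in two different sessions.
session_1 = bytes.fromhex("a55a0010ff0200000001")
session_2 = bytes.fromhex("a55a0010ff0200000001")
# Hypothetical frames from a protocol that carries a changing counter byte.
counted_1 = bytes.fromhex("a55a0010ff0200070001")
counted_2 = bytes.fromhex("a55a0010ff0200080001")

print(varying_offsets(session_1, session_2))  # [] -> fully static frame
print(varying_offsets(counted_1, counted_2))  # [7] -> one byte changes per session
```

This is a triage hint rather than proof. A fully static frame still has to be replayed against lab equipment to confirm the finding, and a varying field may turn out to be a predictable counter that offers little real protection.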

2. Weak Cryptography and Broken Authentication

Where authentication does exist in OT and IoT devices, it is frequently implemented poorly. This manifests in several distinct ways.

Hardcoded credentials remain endemic in this space. Devices ship from the factory with fixed usernames and passwords (sometimes documented in publicly available manuals, sometimes discoverable through firmware analysis) that either cannot be changed by the end user or for which a change is not enforced. When these credentials are disclosed or independently discovered, every device of that model running the same firmware version becomes open to compromise.

Weak password policies are similarly widespread. Devices that do accept custom passwords frequently enforce no minimum complexity, no lockout after failed attempts, and no mechanisms to prevent credential stuffing. In embedded systems with limited processing power, brute-force attacks against login interfaces can be highly effective.

Cryptographic implementation failures take several forms. Some devices use deprecated algorithms (MD5 for integrity checking, DES for encryption) that are computationally breakable with modern hardware. Others use strong algorithms but implement them incorrectly: predictable initialisation vectors, reused nonces, static symmetric keys embedded in firmware, or certificates signed with keys that are identical across all devices of a given model.

Insecure token and session management is particularly prevalent in devices with web interfaces. Session tokens generated with insufficient entropy, tokens that do not expire, and the absence of CSRF protections are common findings.
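
For session tokens, one possible first-pass check is per-position Shannon entropy across a sample of collected tokens: positions that never change contribute nothing an attacker has to guess. The function name and the sample tokens below are illustrative assumptions, not output from a real device.

```python
import math
from collections import Counter

def position_entropy(tokens: list[str]) -> list[float]:
    """Shannon entropy (bits) of the character observed at each token position."""
    length = min(len(t) for t in tokens)
    entropies = []
    for i in range(length):
        counts = Counter(t[i] for t in tokens)
        total = sum(counts.values())
        entropies.append(
            -sum((n / total) * math.log2(n / total) for n in counts.values())
        )
    return entropies

# Hypothetical tokens: fixed prefix, then a value that increments per login.
tokens = ["SESS00a1", "SESS00a2", "SESS00a3", "SESS00a4"]
per_position = position_entropy(tokens)
static = [i for i, h in enumerate(per_position) if h == 0.0]
print(static)            # [0, 1, 2, 3, 4, 5, 6]: positions that never vary
print(per_position[-1])  # 2.0 bits: four equally frequent values observed
```

A small sample like this can only reveal obvious structure such as fixed prefixes and counters. It cannot show that a generator is sound, so a clean result here is a reason to keep looking rather than a pass.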

One of the most worrying things I come across is cryptographic protection for firmware and communications that has been implemented in demonstrably broken ways, providing the appearance of security without the substance. This is arguably more dangerous than the absence of security controls, because it can create false confidence.
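
A toy example shows how a design that looks sound on paper can be undone by a single implementation mistake such as a reused nonce. The sketch below derives a keystream from SHA-256 in a counter construction purely for illustration (it is not a vetted cipher, and the key, nonce, and messages are made up). Encrypting two messages under the same key and nonce lets an observer cancel the keystream by XORing the ciphertexts, after which one known plaintext reveals the other.

```python
import hashlib

def keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256(key || nonce || counter) blocks. Illustration only."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]

def xor(a: bytes, b: bytes) -> bytes:
    """XOR two byte strings, truncating to the shorter one."""
    return bytes(x ^ y for x, y in zip(a, b))

def encrypt(key: bytes, nonce: bytes, plaintext: bytes) -> bytes:
    return xor(plaintext, keystream(key, nonce, len(plaintext)))

key, nonce = b"static-device-key", b"\x00" * 8   # the same nonce is used twice
known = b"SETPOINT=0450;UNIT=C"
secret = b"SETPOINT=0999;UNIT=C"
c1, c2 = encrypt(key, nonce, known), encrypt(key, nonce, secret)

# The keystream cancels out: c1 XOR c2 == known XOR secret.
recovered = xor(xor(c1, c2), known)
print(recovered)  # b'SETPOINT=0999;UNIT=C'
```

The attacker never learns the key. The strength of the underlying algorithm is irrelevant once the nonce repeats, which is exactly the kind of flaw that survives a checkbox review of "uses encryption".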

What I look for: Firmware extraction and analysis for embedded credentials and cryptographic keys, TLS configuration assessment, entropy analysis of session tokens, certificate chain validation, protocol-level cryptographic analysis, password policy testing against all exposed interfaces.
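
As a rough illustration of the first item, firmware analysis for embedded credentials, the sketch below pulls printable ASCII runs out of an image (much as the `strings` utility does) and flags those that match a few credential-like patterns. The patterns, the function name, and the sample blob are my own illustrative assumptions; real firmware usually needs unpacking or decompression first, and every hit needs manual confirmation.

```python
import re

# Runs of at least six printable ASCII characters.
PRINTABLE_RUN = re.compile(rb"[\x20-\x7e]{6,}")
# Illustrative patterns only; tune these for the target under test.
CREDENTIAL_HINT = re.compile(
    rb"(passw(or)?d|secret|api[_-]?key)\s*[=:]|^root:[^:]+:", re.IGNORECASE
)

def find_credential_strings(image: bytes) -> list[tuple[int, str]]:
    """Return (offset, text) for printable runs that look credential-related."""
    hits = []
    for match in PRINTABLE_RUN.finditer(image):
        if CREDENTIAL_HINT.search(match.group()):
            hits.append((match.start(), match.group().decode("ascii")))
    return hits

# Synthetic blob standing in for an extracted firmware image.
blob = (b"\x00\x01\xff" + b"admin_password=letmein" + b"\x00"
        + b"banner text" + b"\x00" + b"root:x1y2z3:0:0" + b"\x00")
hits = find_credential_strings(blob)
for offset, text in hits:
    print(f"0x{offset:04x}: {text}")
# 0x0003: admin_password=letmein
# 0x0026: root:x1y2z3:0:0
```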

3. Insecure Firmware Update Mechanisms

The ability to update firmware is, in principle, one of the most important security controls a device vendor can provide: it is the mechanism by which vulnerabilities are patched. In practice, the firmware update mechanisms in many IoT and OT devices are themselves a significant attack vector.

The gold standard for firmware updates is a signed update package, where the device validates a cryptographic signature before applying the update, using a key whose private component is held securely by the vendor. In reality, many devices fall far short of this standard.

Some devices accept unsigned firmware updates entirely: any image that conforms to the expected format will be applied without challenge. Others implement signature verification but use weak algorithms, or verify the signature in a way that can be bypassed (for example, by exploiting a parsing vulnerability in the update handler before the signature check is reached). Some devices perform the check but use a hardcoded, publicly known key, or a key that is shared across all devices and can therefore be extracted from any unit.

Discovering these flaws requires a detailed understanding of the update protocol, access to the firmware update tooling (often obtained through reverse engineering), and the ability to craft and test modified images.

Beyond code signing, there are related issues: updates delivered over unencrypted channels vulnerable to man-in-the-middle interception, update servers that accept requests without authenticating the requesting device, and rollback vulnerabilities that allow an attacker to downgrade a device to a version with known, unpatched vulnerabilities.

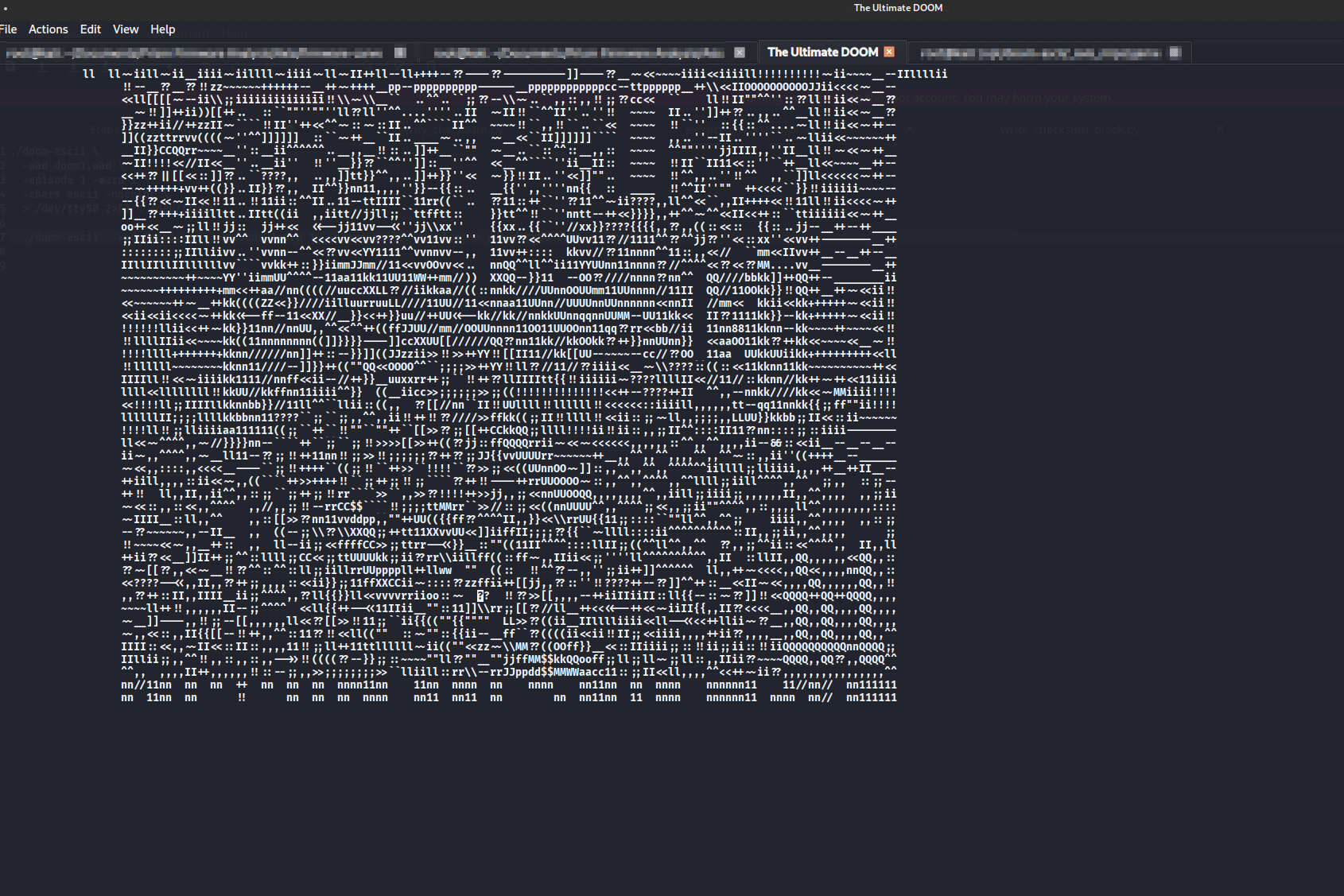

Figure 1: Running ASCII Doom on an OT device to prove to the client that it was insecure

What I look for: Firmware acquisition via hardware interfaces (JTAG, UART, SPI flash) and network capture, cryptographic signature scheme analysis, update protocol dissection, rollback protection assessment, update server authentication testing.
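
The ordering of checks in an update handler matters as much as the checks themselves. The sketch below shows one possible acceptance flow: authenticate the raw image bytes first, parse the header only afterwards, and refuse anything that is not newer than the installed version. The image layout (4-byte magic, 4-byte version, payload, trailing 32-byte tag) and every name here are hypothetical. The verifier is passed in as a function because the choice of scheme belongs to the vendor; the HMAC used in the demonstration is a stand-in to keep the example dependency-free, and a real device should verify an asymmetric signature so that no signing secret is stored on the device.

```python
import hashlib
import hmac
import struct
from typing import Callable

MAGIC = b"FWIM"   # hypothetical image magic
TAG_LEN = 32      # hypothetical trailing tag length

class UpdateRejected(Exception):
    pass

def accept_update(image: bytes, installed_version: int,
                  verify: Callable[[bytes, bytes], bool]) -> int:
    """Return the new version if the image is acceptable, else raise."""
    if len(image) < 8 + TAG_LEN:
        raise UpdateRejected("image too short")
    body, tag = image[:-TAG_LEN], image[-TAG_LEN:]
    # 1. Authenticate the raw bytes BEFORE any parsing touches them.
    if not verify(body, tag):
        raise UpdateRejected("signature check failed")
    # 2. Only now parse the (authenticated) header.
    magic, version = struct.unpack(">4sI", body[:8])
    if magic != MAGIC:
        raise UpdateRejected("bad magic")
    # 3. Rollback protection: never install an older or equal version.
    if version <= installed_version:
        raise UpdateRejected("rollback attempt")
    return version

# Demonstration only: HMAC stands in for an asymmetric signature check.
DEMO_KEY = b"demo-only-key"

def demo_verify(body: bytes, tag: bytes) -> bool:
    expected = hmac.new(DEMO_KEY, body, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)

def demo_build(version: int, payload: bytes) -> bytes:
    body = struct.pack(">4sI", MAGIC, version) + payload
    return body + hmac.new(DEMO_KEY, body, hashlib.sha256).digest()

print(accept_update(demo_build(5, b"new code"), installed_version=4,
                    verify=demo_verify))  # 5
```

Checking the tag before parsing closes the bypass route in which a malformed header is used to exploit the handler before verification runs. The version comparison addresses downgrade attacks, although on a real device the installed version would also need to be stored somewhere an attacker cannot reset.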

4. Remote Code Execution via Native Functionality

This category is, in some respects, the most philosophically interesting and the most commercially sensitive. Some of the most impactful vulnerabilities in OT and IoT devices are not bugs at all in the conventional sense: they are the intended behaviour of the device, used in unintended ways.

Many PLCs and embedded controllers include the ability to execute native code (ARM, x86, or MIPS machine code) as part of their legitimate functionality. This capability exists to allow custom applications, high-performance data processing, and integration with third-party software. But when combined with an insecure engineering protocol that allows unauthenticated code uploads, or a web interface that accepts arbitrary file uploads, this becomes a mechanism for persistent, low-level compromise of the device. In practice, an attacker who gains access to the engineering network can, in some cases, achieve root-level code execution on a PLC or RTU without needing to find or exploit a single vulnerability in the traditional sense.

Beyond this design-level issue, there are also memory corruption vulnerabilities that permit code execution through more traditional means: buffer overflows in protocol parsers, heap corruption in web server components, format string vulnerabilities in logging functions. Embedded systems running without modern memory protections (no address space layout randomisation, no stack canaries, no executable space protection) are particularly vulnerable to these classes of attack, and they remain common in OT devices where resource constraints and legacy codebases make it difficult to retrofit protections.

What I look for: Enumeration of native code execution capabilities, assessment of whether those capabilities can be accessed without authentication, fuzzing of protocol parsers and web interfaces, binary analysis for memory corruption vulnerabilities, assessment of binary hardening.
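
A first pass at the binary hardening assessment can be automated by reading ELF headers directly. The sketch below assumes the firmware's executables are ELF files and uses names of my own choosing. It reports two things: whether the file is of type ET_DYN (position-independent, which is a precondition for randomising the main image, although shared libraries also carry this type) and whether the PT_GNU_STACK program header marks the stack as executable. It does not detect stack canaries, which requires symbol or disassembly analysis, and established tools such as checksec cover far more.

```python
import struct

ET_DYN = 3
PT_GNU_STACK = 0x6474E551
PF_X = 0x1

def elf_hardening(data: bytes) -> dict:
    """Report position independence and stack executability from raw ELF bytes."""
    if data[:4] != b"\x7fELF":
        raise ValueError("not an ELF file")
    is_64 = data[4] == 2                     # EI_CLASS: 1 = 32-bit, 2 = 64-bit
    end = "<" if data[5] == 1 else ">"       # EI_DATA: 1 = little-endian
    (e_type,) = struct.unpack_from(end + "H", data, 16)
    if is_64:
        (phoff,) = struct.unpack_from(end + "Q", data, 32)
        phentsize, phnum = struct.unpack_from(end + "HH", data, 54)
        flags_at = 4                         # p_flags offset in Elf64_Phdr
    else:
        (phoff,) = struct.unpack_from(end + "I", data, 28)
        phentsize, phnum = struct.unpack_from(end + "HH", data, 42)
        flags_at = 24                        # p_flags offset in Elf32_Phdr
    stack_exec = None                        # None: no PT_GNU_STACK header found
    for i in range(phnum):
        base = phoff + i * phentsize
        (p_type,) = struct.unpack_from(end + "I", data, base)
        if p_type == PT_GNU_STACK:
            (p_flags,) = struct.unpack_from(end + "I", data, base + flags_at)
            stack_exec = bool(p_flags & PF_X)
    return {"position_independent": e_type == ET_DYN,
            "executable_stack": stack_exec}

# Synthetic 32-bit little-endian ARM header (ET_EXEC) with one PT_GNU_STACK
# entry whose flags are RWX (7). Built by hand purely for the demonstration.
ident = b"\x7fELF" + bytes([1, 1, 1]) + bytes(9)
header = ident + struct.pack("<HHIIIIIHHHHHH",
                             2, 40, 1, 0, 52, 0, 0, 52, 32, 1, 0, 0, 0)
phdr = struct.pack("<IIIIIIII", PT_GNU_STACK, 0, 0, 0, 0, 0, 7, 16)
report = elf_hardening(header + phdr)
print(report)  # {'position_independent': False, 'executable_stack': True}
```

A result of `None` for the stack means the header is absent, in which case the effective default depends on the platform and should be checked on the target rather than assumed.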

5. Denial of Service and Availability Attacks

In IT security, denial of service is typically treated as a lower-severity issue than data exfiltration or code execution. In OT environments, this is entirely different. A PLC that crashes mid-cycle can cause a production stoppage costing hundreds of thousands of pounds. An HMI that becomes unresponsive at a critical moment can prevent an operator from responding to an alarm condition. A network device that fails can isolate an entire plant segment.

The availability vulnerabilities I encounter in OT devices are diverse. Some are resource exhaustion issues. Some are protocol-level issues. Some are implementation bugs.

What is particularly concerning is that many OT devices do not recover automatically from a denial of service condition. A device that crashes may require a manual power cycle to recover. In some environments, achieving this level of disruption is the explicit goal of a threat actor.

What I look for: Protocol-level fuzzing for crash conditions, resource exhaustion testing, assessment of recovery behaviour after crash, identification of unauthenticated/undocumented commands with disruptive effects, network stack resilience testing.
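
Protocol-level fuzzing for crash conditions usually starts from a valid captured frame and mutates it. Below is a minimal sketch of a mutation generator. It only produces test cases and deliberately contains no network code, since delivery and crash monitoring depend on the target and should only ever be pointed at lab equipment. The mutation strategies, the function name, and the assumption of a 2-byte big-endian length field at a known offset are all illustrative.

```python
import random
import struct
from typing import Iterator

def mutate(seed_frame: bytes, length_offset: int, count: int,
           rng_seed: int = 0) -> Iterator[bytes]:
    """Yield `count` mutated variants of a valid frame (deterministic per rng_seed)."""
    rng = random.Random(rng_seed)
    boundary_lengths = [0x0000, 0x0001, 0x7FFF, 0x8000, 0xFFFF]
    for _ in range(count):
        frame = bytearray(seed_frame)
        strategy = rng.choice(["bitflip", "length", "truncate", "extend"])
        if strategy == "bitflip":
            pos = rng.randrange(len(frame))
            frame[pos] ^= 1 << rng.randrange(8)
        elif strategy == "length":
            # Lie about the payload size to probe length handling in the parser.
            struct.pack_into(">H", frame, length_offset,
                             rng.choice(boundary_lengths))
        elif strategy == "truncate":
            del frame[rng.randrange(1, len(frame)):]
        else:
            extra = rng.randrange(1, 64)
            frame.extend(rng.randrange(256) for _ in range(extra))
        yield bytes(frame)

# Seed: a well-formed Modbus/TCP read request; its length field sits at offset 4.
seed = bytes.fromhex("00010000000601030000000a")
cases = list(mutate(seed, length_offset=4, count=5))
for case in cases:
    print(case.hex())
```

Fixing the random seed makes a run reproducible, which matters when a crash has to be traced back to the exact frame that caused it. Recording the device's recovery behaviour after each crash is the other half of the exercise and is not shown here.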

END OF PART 1